Hello,

I’ve got what feels like a simple-but-long-winded n00b question that I’m hoping @mercenary_sysadmin or someone else might have some insight on.

Really quick, this is my setup for QEMU-based VM disk images in Proxmox:

- Pool

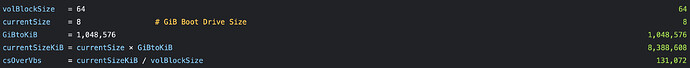

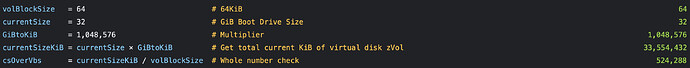

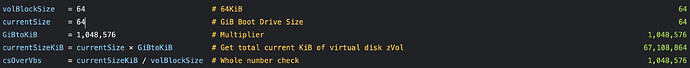

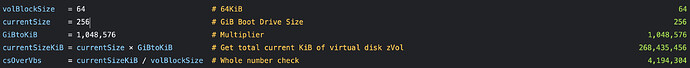

- ashift: 12

- Volblocksize for general-purpose VM virtual disk: 64k

- Typical VM drive size: 32 or 64 GiB; 256 GiB (Windows 11).

Question: I assumed that as long as I used ashift=12 and volblocksize=64k, I didn’t have to worry about my QEMU zvols getting out of block alignment (is that the right way to phrase it?). Is that not correct?

How do I verify my block alignment is correct after the fact, on zVols I’m using for VMs?

What got me thinking about this?

I’m watching the development of the new iSCSI and NVME-over-TCP storage plugin for Proxmox that allows using zVol-backed iSCSI/NVME-over-TCP as shared storage for Proxmox nodes:

I’m interested in this, as I have a Proxmox node that doesn’t have a ton of internal storage and would really like to use ZFS over iSCSI to store its VM virtual disks.

I just saw a bugfix that caught my attention. Fix VM migration size mismatch by WarlockSyno · Pull Request #28 · truenas/truenas-proxmox-plugin · GitHub

What’s happening:

When Proxmox allocates the target disk on TrueNAS, `alloc_image` rounds the requested size up to the next multiple of `zvol_blocksize`. The problem is QEMU’s block mirror checks that source and target block devices are the exact same size. If the source disk isn’t already aligned to your configured blocksize (16K, 128K, etc.), the target ends up a few KiB larger and QEMU bails immediately.

In this specific case the VM’s disk was 419430856 KiB, divisible by 8K but not 16K or 128K. Both TrueNAS storages had larger blocksizes configured, so both targets came out bigger than the source by 8-57 KiB depending on the storage.
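To check my own understanding of the rounding described above, here is a minimal Python sketch of the arithmetic. It is not the plugin's code (I haven't copied anything from it); `round_up` is a name I made up, and the only real input is the 419430856 KiB disk size quoted in the PR.

```python
KIB = 1024

def round_up(size_bytes: int, blocksize_bytes: int) -> int:
    """Round size_bytes up to the next multiple of blocksize_bytes."""
    return -(-size_bytes // blocksize_bytes) * blocksize_bytes

# Source disk size taken from the PR description.
source = 419430856 * KIB

for bs_kib in (8, 16, 128):
    target = round_up(source, bs_kib * KIB)
    extra_kib = (target - source) // KIB
    print(f"{bs_kib:>3}K blocksize: target is {extra_kib} KiB larger than source")
```

By this arithmetic the 8K target matches the source exactly, the 16K target comes out 8 KiB larger, and the 128K target comes out 56 KiB larger, which is the kind of mismatch QEMU's mirror would reject.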

Why the rounding exists:

It was added to pre-align zvol sizes to the configured blocksize for ZFS efficiency. The logic makes sense for fresh disk creation, but it breaks migrations from storage backends that don’t share the same alignment.

The proposed fix:

Instead of rounding `$bytes` up, step the volblocksize down by halves until it evenly divides the requested size. Since every byte count is divisible by 512, this always terminates. A perfectly-sized zvol gets created with the full configured blocksize (no behavior change for normal disk creation); a misaligned one gets a smaller blocksize that fits exactly.
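As I read it, the proposed fix amounts to something like the following Python sketch. This is my own illustration, not the PR's implementation: `fit_blocksize` and its `floor` parameter are hypothetical names, and I'm assuming the configured blocksize is a power of two and that 512 bytes is the lower bound, as the description implies.

```python
KIB = 1024

def fit_blocksize(size_bytes: int, configured_blocksize: int, floor: int = 512) -> int:
    """Halve the blocksize until it evenly divides size_bytes.

    Assumes configured_blocksize is a power of two and size_bytes is a
    multiple of `floor`, so the loop stops at `floor` at the latest.
    """
    blocksize = configured_blocksize
    while blocksize > floor and size_bytes % blocksize != 0:
        blocksize //= 2
    return blocksize

# The disk from the PR: not a multiple of 16K or 128K, but a multiple of 8K.
misaligned = 419430856 * KIB
print(fit_blocksize(misaligned, 128 * KIB) // KIB, "KiB")

# A whole-GiB disk keeps the full configured blocksize.
aligned = 64 * 1024**3
print(fit_blocksize(aligned, 64 * KIB) // KIB, "KiB")
```

For the disk in the PR this lands on 8K regardless of whether 16K or 128K was configured, while a 64 GiB disk keeps its 64K volblocksize.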

I understand the problem (zvol volblocksize mismatch) and the solution, even if I would prefer to be warned about the issue and told to fix it myself rather than have an automated algorithm transparently change the volblocksize without telling me.

Fixing the underlying problem (if it exists) seems better than forcing a volblocksize change?

But what I don’t understand is this:

If the source disk isn’t already aligned to your configured blocksize (16K, 128K, etc.), the target ends up a few KiB larger and QEMU bails immediately.

That implies that I need to select specific virtual disk sizes that are divisible by my chosen volblocksize to avoid misalignment. That’s not a constraint I’ve been aware of up to now. Am I misinterpreting something? If not, what’s the best way to determine what disk size multiples go with a specific blocksize?

Is it as simple as just dividing the total number of KiB allocated to a VM’s virtual disk by the volblocksize? And if so, how do I determine that total number of KiB for zvols on a thin-provisioned pool?
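If it really is that simple, the check I have in mind is roughly the sketch below. My working assumption (please correct me if it's wrong) is that the number to test is the zvol's `volsize` property, which is the logical size and shouldn't depend on thin provisioning, and that I can read both values in bytes with something like `zfs get -Hp -o value volsize,volblocksize <pool/zvol>`. The function name `is_aligned` is just mine.

```python
GIB = 1024**3
KIB = 1024

def is_aligned(volsize_bytes: int, volblocksize_bytes: int) -> bool:
    """True if the zvol's logical size is a whole number of volblocksize blocks."""
    return volsize_bytes % volblocksize_bytes == 0

# My typical disk sizes against my 64k volblocksize.
for size_gib in (32, 64, 256):
    print(f"{size_gib} GiB @ 64K:", is_aligned(size_gib * GIB, 64 * KIB))

# The disk from the PR against a 16K volblocksize.
print("PR disk @ 16K:", is_aligned(419430856 * KIB, 16 * KIB))
```

If that's right, any disk sized in whole GiB is a multiple of every power-of-two volblocksize, so my 32, 64, and 256 GiB disks would all pass, and only oddly sized disks like the one in the PR would fail.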