I recently downsized a home-use ZFS pool from 10 smaller LUKS-encrypted disks to a single mirrored zpool of 2x 8TB drives, plus a single SSD as a scratch disk in its own single-disk zpool. Both pools are encrypted using ZFS native encryption.

When migrating, I moved from LUKS to ZFS native encryption because it made sense to me to give ZFS more direct access to the drives, rather than presenting it with the ‘virtual’ device that cryptsetup creates.

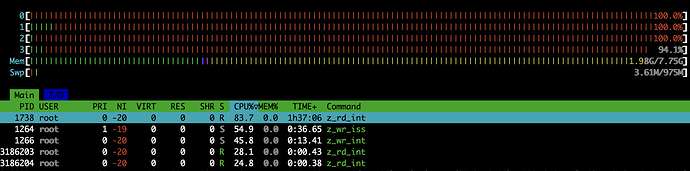

I’ve noticed that moving files between the SSD and the mirror pool (just a simple mv or rsync) pushes the CPU usage of the host up to nearly 100%.

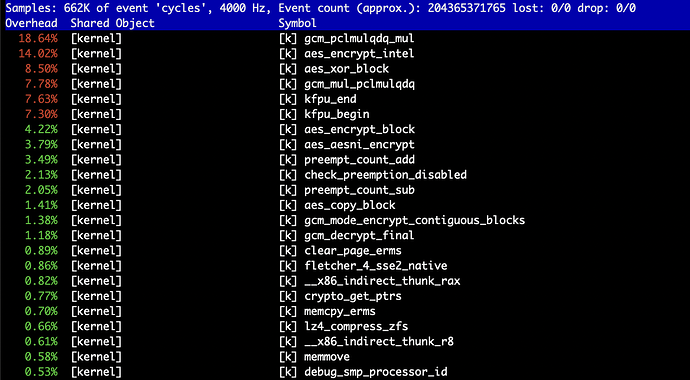

This seems like quite a high level of CPU usage for a simple copy operation, even at the ~250MB/s the HDD mirror can write at, so it got me wondering whether ZFS native encryption actually fully supports using encryption acceleration instructions from the CPU. I’ve tried searching and I can find some fairly conflicting info about support, probably since much of it is quite old at this point.

This is a Proxmox 8 VM with 4x vCPU cores from a Xeon E3-1240 v2, with disks attached to the VM via a directly passed-through LSI SAS2008-based controller. I’ve made sure that the VM’s CPU type is set to host in Proxmox and that /proc/cpuinfo in the VM does show the aes flag as available.
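For reference, the flag check I did amounts to something like this minimal Python sketch. It assumes the x86 `/proc/cpuinfo` layout with a `flags` line per core. The function names are my own, and the extra flag names besides `aes` (`pclmulqdq`, `avx`) are only my guess at what else accelerated AES-GCM might depend on.

```python
from pathlib import Path


def cpu_flags(cpuinfo_text: str) -> set:
    """Return the union of feature flags found on 'flags' lines of cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def report(cpuinfo_text: str, wanted=("aes", "pclmulqdq", "avx")) -> dict:
    """Map each wanted flag name (an assumed list, see above) to whether it is present."""
    present = cpu_flags(cpuinfo_text)
    return {name: name in present for name in wanted}


if __name__ == "__main__":
    path = Path("/proc/cpuinfo")
    if path.exists():
        for name, ok in report(path.read_text()).items():
            print(f"{name}: {'present' if ok else 'missing'}")
    else:
        print("/proc/cpuinfo not found (not Linux?)")
```

This only shows what the guest CPU advertises; it says nothing about whether ZFS actually uses those instructions.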

The VM runs Debian 12, with ZFS installed from the package in the standard Debian stable repos.

What I’m wondering is:

- Does ZFS actually use AES-NI for native encryption when available?

- Is the Debian ZFS package, which (I think) builds its kernel module using DKMS, going to be any different in terms of this support than something like Ubuntu or Alpine, where the module comes prebuilt from the distribution? I’m happy to move to either if I have to, but I haven’t tried yet since I’d rather avoid the headache if possible.
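One way to poke at the first question might be to look at which AES and GCM implementations the module itself reports. The sketch below assumes OpenZFS exposes `icp_aes_impl` and `icp_gcm_impl` as module parameters, under either `/sys/module/icp/parameters` or `/sys/module/zfs/parameters` depending on the version, and that the active choice is shown in square brackets. I haven’t confirmed either assumption on this Debian build, and the helper names are hypothetical.

```python
import re
from pathlib import Path

# Assumed locations; which one exists (if any) may depend on the OpenZFS version.
PARAM_DIRS = (Path("/sys/module/icp/parameters"), Path("/sys/module/zfs/parameters"))
PARAM_NAMES = ("icp_aes_impl", "icp_gcm_impl")


def selected_impl(param_text: str):
    """Return the bracketed entry from text like 'cycle [fastest] generic aesni', or None."""
    match = re.search(r"\[([^\]]+)\]", param_text)
    return match.group(1) if match else None


def available_impls(param_text: str) -> list:
    """Return every listed implementation name with the brackets stripped."""
    return [token.strip("[]") for token in param_text.split()]


if __name__ == "__main__":
    for name in PARAM_NAMES:
        for directory in PARAM_DIRS:
            path = directory / name
            try:
                text = path.read_text()
            except OSError:
                continue  # parameter not present or not readable at this location
            print(f"{path}: selected={selected_impl(text)} "
                  f"available={available_impls(text)}")
            break
        else:
            print(f"{name}: not found under the assumed paths")
```

If `aesni` does not appear in the list for `icp_aes_impl`, that would at least suggest the module isn’t seeing the instructions.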

Thanks for any info anyone’s got, even if it’s just pointers to more recent documentation that I’m not able to find!

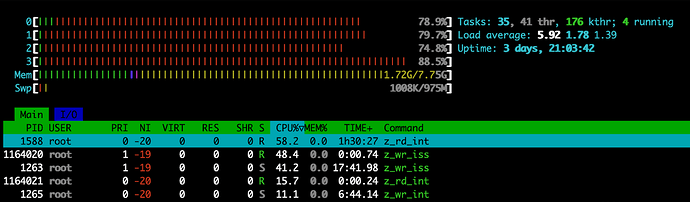

Edit: I thought it might be helpful to include a snapshot of what it looks like in htop when the system is running a heavy copy operation. Seems to be the z_rd_int and z_wr_iss kernel threads consuming most of the CPU time:

That one’s not quite pegging the CPU at 100%, I think because the example I was trying here involved copying a lot of medium-sized files rather than one large one, but it gets the point across at least.